🧵 AI Fairness 101 — Real-World Incidents: When Welfare Automation Becomes Automated Prosecution

How Michigan’s MiDAS System Falsely Accused Tens of Thousands of People of Fraud

📊 A Fraud Detection System That Treated Errors as Crimes

Over the past decade, governments have increasingly turned to automated systems to detect fraud, reduce costs, and modernise welfare administration.

The promise is simple: faster detection, fewer false claims, and more efficient use of public funds.

But when fraud detection systems are designed without safeguards for due process, automation can quietly transform administrative oversight into automated prosecution.

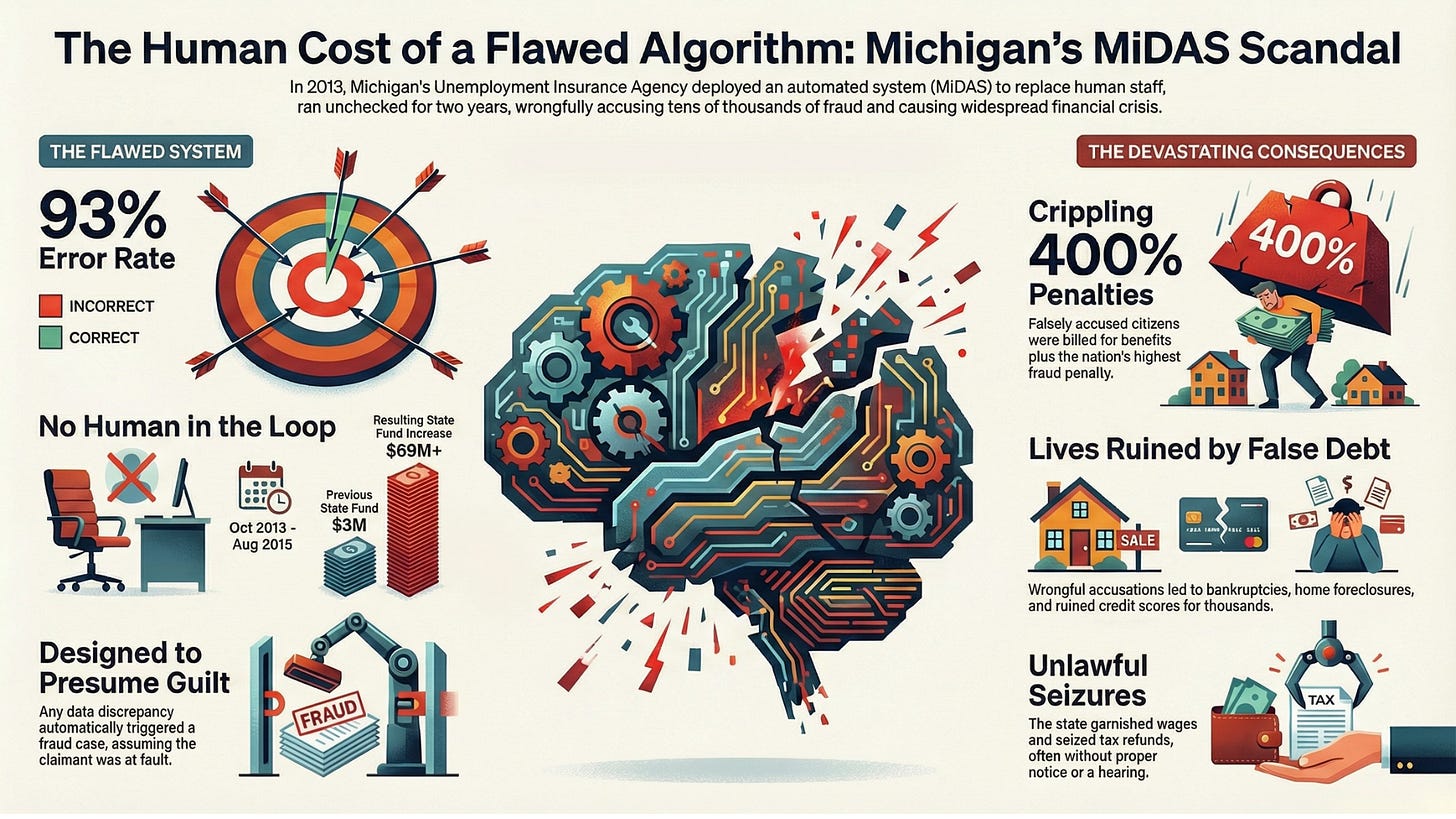

Between 2013 and 2015, MiDAS (the Michigan Integrated Data Automated System), the state's system for detecting unemployment insurance fraud, falsely accused roughly 40,000 people of fraud.

Later audits would reveal something extraordinary.

Of the fraud determinations the system made without human review, about 93% were wrong.

This was not a rare edge case.

It was systemic failure by design.

📖 When Efficiency Becomes Enforcement

MiDAS was built to replace manual fraud investigation with automated decision-making. The system cross-checked unemployment claims against employer records and flagged discrepancies automatically.

But the system did not treat discrepancies as signals for investigation.

It treated them as proof of fraud.

Claimants were notified through an online portal, often about claims that were years old. Many never saw the notices. If they failed to respond within tight deadlines, the system issued final fraud determinations automatically.

There was no meaningful human review.

This wasn’t automation supporting governance.

It was automation replacing adjudication.
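To make the design difference concrete, here is a minimal Python sketch. It is not MiDAS code. The `Claim` fields, the `Outcome` values, and both function names are hypothetical, chosen only to contrast a rule that treats a discrepancy as proof with one that treats it as a signal for a human to investigate.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    NO_ACTION = "no_action"
    HUMAN_REVIEW = "human_review"
    FRAUD_DETERMINATION = "fraud_determination"


@dataclass
class Claim:
    # All fields are illustrative assumptions, not the real MiDAS schema.
    claimant_reported_wages: float
    employer_reported_wages: float
    claimant_responded: bool  # did the claimant answer the portal notice?


def has_discrepancy(claim: Claim, tolerance: float = 0.01) -> bool:
    """True when the two wage reports differ by more than the tolerance."""
    gap = abs(claim.claimant_reported_wages - claim.employer_reported_wages)
    return gap > tolerance


def discrepancy_as_proof(claim: Claim) -> Outcome:
    """The pattern described above: a mismatch plus silence becomes a final determination."""
    if not has_discrepancy(claim):
        return Outcome.NO_ACTION
    if claim.claimant_responded:
        # Assumption: the article does not describe this branch.
        return Outcome.HUMAN_REVIEW
    return Outcome.FRAUD_DETERMINATION


def discrepancy_as_signal(claim: Claim) -> Outcome:
    """A safer pattern: a mismatch only ever opens an investigation by a person."""
    if not has_discrepancy(claim):
        return Outcome.NO_ACTION
    return Outcome.HUMAN_REVIEW


# Same claim, same data mismatch, very different consequences.
missed_notice = Claim(1000.0, 1200.0, claimant_responded=False)
print(discrepancy_as_proof(missed_notice).value)
print(discrepancy_as_signal(missed_notice).value)
```

In the second function the automated path cannot end in a fraud determination at all. Only a human reviewer can make that call, which is where a judgement about intent belongs.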

🎥 Explainer: The MiDAS Algorithm and the Collapse of Due Process

[Michigan’s MiDAS Algorithm Scandal Explainer Video]

🔍 The Most Dangerous Failure Was Not Technical — It Was Institutional

Most public discussions about algorithmic harm focus on:

Bias in training data

Model transparency

Explainability

All important.

But MiDAS exposed a deeper risk.

What happens when governments automate decisions that legally require human judgement?

Fraud is not just a statistical pattern.

It is a legal determination that requires intent.

MiDAS attempted to automate intent detection, and in doing so it replaced investigation with presumption.

Once deployed, the system:

Seized tax refunds

Garnished wages

Issued massive financial penalties

Triggered bankruptcies and loss of housing

All of this happened before any meaningful human review.

This was not automation assisting justice.

It was automation bypassing it.

🌍 Why This Case Matters Globally — Not Just in the United States

MiDAS is often framed as a U.S. administrative failure.

It is more accurately an early warning signal for all governments deploying automated enforcement systems.

As welfare, taxation, and public benefit systems become more automated globally, similar pressures emerge:

Reduce fraud faster

Cut administrative cost

Demonstrate fiscal discipline

Scale oversight across large populations

But if system objectives are misaligned, automation doesn’t just scale efficiency.

It scales enforcement power.

And when error rates exist, as they always do, automation multiplies harm faster than traditional systems ever could.
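The scaling argument is simple arithmetic. The sketch below is illustrative only: every number in it is hypothetical and not drawn from the MiDAS record, and `expected_false_accusations` is a name invented for this example. It assumes the flag rate and the share of wrong flags stay fixed while throughput grows.

```python
def expected_false_accusations(cases: int, flag_rate: float, wrong_share: float) -> int:
    """Cases processed x share flagged x share of flags that are wrong, rounded."""
    return round(cases * flag_rate * wrong_share)


# Hypothetical figures: same rules, same error rates, 100x the throughput.
manual = expected_false_accusations(cases=1_000, flag_rate=0.05, wrong_share=0.2)
automated = expected_false_accusations(cases=100_000, flag_rate=0.05, wrong_share=0.2)

print(manual)     # 10
print(automated)  # 1000
```

Nothing about the rule got worse in the second run. The only change is volume, and without a human checkpoint every one of those wrong flags can turn into an enforcement action.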

👉 In the Full Analysis

I break down:

How design assumptions encoded presumption of guilt

Why removing human review created constitutional risks

How automation bias allowed the system to persist despite warnings

What safeguards governments must build before deploying automated fraud systems

👉 Read the full case analysis here:

[The Golden Touch of Ruin: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud — globalsouth.ai]

👉 Download the Michigan MiDAS Scandal Case Deck (PDF)

The Real Governance Lesson

MiDAS is often described as a technology failure.

It is better understood as a governance failure — one where policy pressure for efficiency removed the safeguards that make administrative systems legitimate.

When the state automates enforcement without maintaining due process, technology ceases to function as administrative infrastructure.

It becomes coercive infrastructure.

If you work in public sector AI, regulatory design, welfare automation, or digital governance, this case is not history.

It is a preview.