AI Fairness 101: 🏛️ Algorithmic Justice Under Scrutiny: Lessons from the COMPAS Debate

How COMPAS exposed the limits of “objective” AI — and why fairness in automated justice is ultimately a policy choice, not a mathematical certainty.

⚖️ When Algorithms Help Decide Who Goes to Jail

AI was supposed to make justice more consistent.

Instead, the COMPAS scandal exposed a harder truth:

Fairness in algorithms is not a technical setting. It is a political choice.

📊 What COMPAS Was Supposed to Do

Courts across the United States adopted COMPAS to predict recidivism risk and guide decisions on bail, parole, and sentencing.

The logic seemed simple:

Replace inconsistent human judgement.

Use data to assess risk.

Improve public safety.

But the system revealed a deeper conflict: you cannot define fairness without deciding who bears the cost of error.

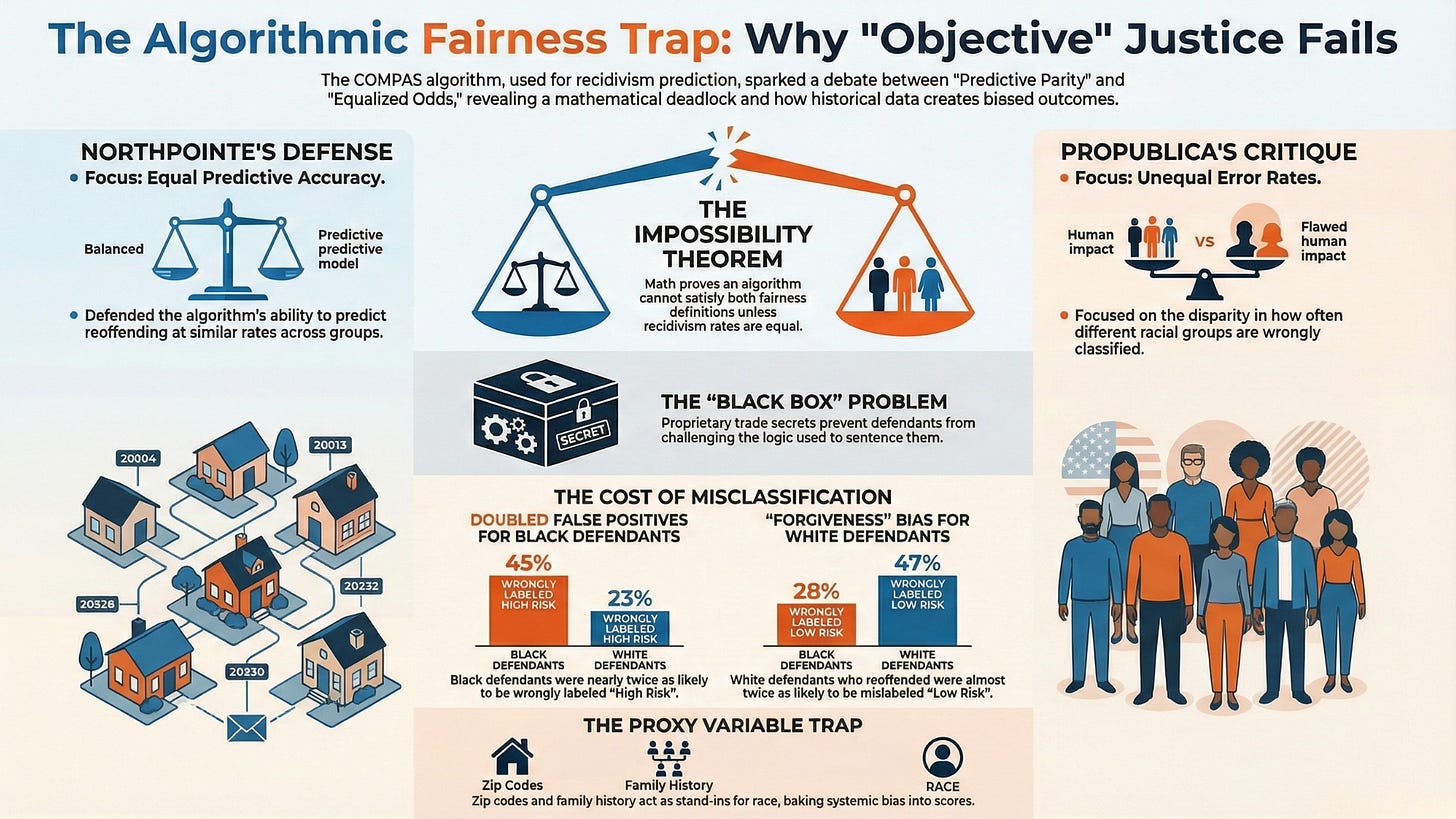

⚠️ The Fairness Dilemma That Broke the Narrative

Two claims emerged from the same dataset:

ProPublica: Black defendants who did not reoffend were nearly twice as likely as White defendants to have been labelled high risk.

The developer: The model was fair because a given risk score implied a similar likelihood of reoffending in every group.

Both statements were statistically valid.

This is the fairness impossibility problem:

You cannot satisfy equal error rates and predictive parity at the same time when base rates differ, unless the model predicts perfectly.

When fairness definitions collide, the system doesn’t fail.

People do.
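The collision can be checked with a few lines of arithmetic. From the definitions of the confusion-matrix rates, a group's false positive rate is fixed once you choose its base rate p, its positive predictive value (PPV), and its false negative rate (FNR): FPR = p / (1 - p) × (1 - PPV) / PPV × (1 - FNR). The sketch below is a minimal illustration, assuming two hypothetical groups and made-up rates (none of these are COMPAS figures); the function name `implied_fpr` is ours.

```python
def implied_fpr(base_rate: float, ppv: float, fnr: float) -> float:
    """False positive rate forced by a base rate, a PPV, and an FNR.

    Derived from the confusion-matrix definitions:
    FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR)
    """
    odds = base_rate / (1.0 - base_rate)
    return odds * (1.0 - ppv) / ppv * (1.0 - fnr)


# Hypothetical values for illustration only (not COMPAS figures).
PPV = 0.6  # "high risk" means the same thing in both groups (predictive parity)
FNR = 0.3  # both groups have the same miss rate

# The only thing that differs between the groups is the base rate.
fpr_group_a = implied_fpr(base_rate=0.5, ppv=PPV, fnr=FNR)
fpr_group_b = implied_fpr(base_rate=0.4, ppv=PPV, fnr=FNR)

print(f"Group A false positive rate: {fpr_group_a:.2f}")
print(f"Group B false positive rate: {fpr_group_b:.2f}")
```

With these assumed inputs, holding PPV and FNR equal pushes the false positive rates apart (about 0.47 versus 0.31). Equalising the false positive rates instead would force PPV or FNR to differ. Something has to give, and choosing what gives is the policy decision.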

🔗 When Statistics Can Lead to Criminal Sentences

A “high risk” label can mean:

Higher bail

Denied parole

Longer incarceration

In Broward County data:

45% of Black defendants who did not reoffend had been flagged high risk

23% of White defendants who did not reoffend received the same false label

These are not abstract metrics.

They are lost years, broken families, and reduced life chances.
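Error rates like these depend on who sits in the denominator. "Of the people who did not reoffend, how many were flagged?" and "Of the people who were flagged, how many did not reoffend?" are different questions, and the same table of outcomes can answer them very differently. The sketch below is a minimal illustration with hypothetical counts chosen for round numbers (not the Broward County records); the `Outcomes` class and its field names are our own.

```python
from dataclasses import dataclass


@dataclass
class Outcomes:
    """Confusion-matrix counts for one group of defendants."""
    flagged_reoffended: int     # true positives
    flagged_no_reoffence: int   # false positives
    cleared_reoffended: int     # false negatives
    cleared_no_reoffence: int   # true negatives

    def false_positive_rate(self) -> float:
        # Of the people who did NOT reoffend, what share were flagged high risk?
        negatives = self.flagged_no_reoffence + self.cleared_no_reoffence
        return self.flagged_no_reoffence / negatives

    def false_discovery_rate(self) -> float:
        # Of the people flagged high risk, what share did NOT reoffend?
        flagged = self.flagged_reoffended + self.flagged_no_reoffence
        return self.flagged_no_reoffence / flagged


# Hypothetical counts for illustration only.
group_a = Outcomes(675, 450, 325, 550)
group_b = Outcomes(345, 230, 255, 770)

for name, group in (("A", group_a), ("B", group_b)):
    print(
        f"Group {name}: "
        f"false positive rate {group.false_positive_rate():.0%}, "
        f"flagged but did not reoffend {group.false_discovery_rate():.0%}"
    )
```

With these made-up counts, the false positive rates are 45% and 23%, while the share of flagged people who did not reoffend is 40% in both groups. One table, two defensible readings, which is how both sides of the debate could point to the same data.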

🎥 Explainer: The COMPAS Algorithm Scandal

🧬 The Real Problem Wasn’t the Algorithm — It Was the Data

COMPAS avoided using race directly but relied on proxies:

Zip codes shaped by segregation

Arrest histories shaped by over-policing

Social stability measures shaped by inequality

When historical bias becomes training data, algorithms don’t remove injustice.

They standardise it.
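A toy example shows how a proxy does the work of the variable it replaces. The sketch below assumes a hypothetical population in which group membership and zip code are strongly correlated, and a hypothetical rule that only ever sees the zip code. Nothing here reflects how COMPAS is actually implemented; the names and counts are invented for illustration.

```python
# Hypothetical records: (group, zip_code). Counts are invented for illustration.
population = (
    [("A", "zone_1")] * 80 + [("A", "zone_2")] * 20
    + [("B", "zone_1")] * 20 + [("B", "zone_2")] * 80
)


def group_blind_rule(zip_code: str) -> bool:
    """Flag anyone from zone_1. The rule never receives the group label."""
    return zip_code == "zone_1"


def flag_rate(group: str) -> float:
    """Share of a group's members that the group-blind rule flags."""
    zips = [zip_code for g, zip_code in population if g == group]
    return sum(group_blind_rule(z) for z in zips) / len(zips)


print(f"Group A flagged: {flag_rate('A'):.0%}")
print(f"Group B flagged: {flag_rate('B'):.0%}")
```

The rule is blind to group membership, yet in this toy population it flags one group four times as often as the other. Removing a sensitive column does not remove the pattern that column left in the rest of the data.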

🌍 Why This Matters Beyond the United States

COMPAS is often framed as a U.S. controversy.

It is actually a global warning.

Risk models built on Western social assumptions are now exported into:

Welfare targeting

Credit scoring

Policing

Migration control

In contexts where informal work, displacement, and structural inequality are the norm, these systems can scale exclusion under the guise of modernisation.

❓ Questions We Should Be Asking

Who decides which fairness definition governs liberty?

Should proprietary algorithms be allowed in courtrooms?

If a model is only marginally better than chance, why is it used to determine freedom?

What happens when these systems are exported to countries with weaker legal safeguards?

📌 In the Full Article

We break down:

The mathematical conflict at the heart of algorithmic fairness

How proxy variables encode structural inequality

Why trade secrecy undermines due process

What the Global South should learn before adopting similar systems

👉 Read the full analysis:

The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail

👉 Download the COMPAS Algorithm Scandal Case Explainer Deck (PDF)

🌍 Why This Series Exists

This post is part of AI Fairness 101—Real-World Incidents, documenting how algorithmic systems shape real lives across justice, welfare, finance, and governance.

Because before we automate decisions at scale, we need to understand what we are automating.

💬 Join the conversation

If you work on AI policy, risk, or public systems, your perspective matters.

Reply, share, or reach out — the next standards are being written now.